Introduction

Artificial Intelligence has changed industries. It helps with automation, personalization and decision-making. By 2026 AI will be a part of our daily lives, helping with hiring, finance, healthcare and content suggestions. With these benefits come some important questions about ethics.

AI ethics is about making sure AI systems are designed and used in a way that is fair and transparent. As AI gets more powerful, the risk of it being biased increases. Understanding AI ethics is crucial for everyone, including individuals, businesses and policymakers.

What is AI Ethics

The goal is to make sure AI benefits society without causing harm. This guide will explore the challenges and principles of AI. AI ethics is about the rules that govern AI. It aims to make AI fair, transparent and aligned with values.

● Ethical AI involves fixing issues like bias and privacy. • It requires teamwork between developers organizations and regulators. Why AI Ethics is Important By considering ethics AI can help society while minimizing risks. The importance of AI ethics is huge. AI decisions can affect peoples lives in ways.

● It can determine job opportunities and financial access. • It can also affect healthcare outcomes. Key Ethical Challenges in AI

- Bias and Fairness Without guidelines AI systems can be biased. • They can violate privacy. Create problems.

Ensuring AI is ethical helps build trust. • It protects users. Promotes fairness.

-

Privacy Concerns It also supports innovation by addressing risks. AI systems can be biased if the data is biased. • This can lead to outcomes.

-

Transparency and Explainability Addressing bias requires data selection. • It also requires testing and monitoring. AI systems use a lot of data.

-

Accountability ● This raises concerns about privacy. Protecting information is essential. • It prevents misuse. Ensures trust.

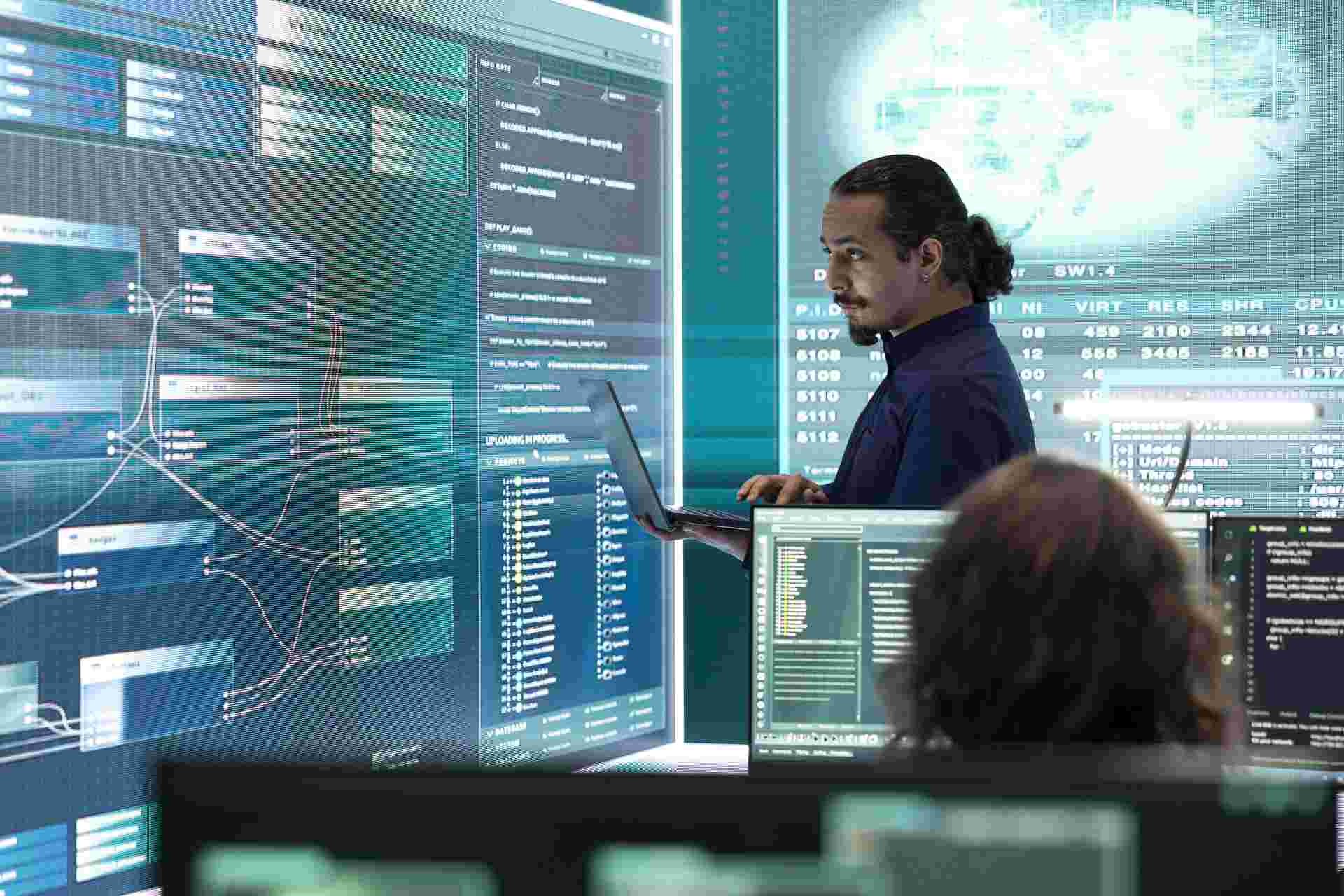

- Security Risks Some AI models are hard to understand. • Transparency is crucial for accountability.

Principles of Ethical AI

-

Fairness It's hard to determine who is responsible for AI decisions. • Clear accountability frameworks are needed.

-

Transparency AI systems can be vulnerable to attacks. • Ensuring security is essential.

-

Accountability AI systems should provide outcomes. • Decisions made by AI should be understandable.

-

Privacy Organizations must take responsibility for AI systems. • User data should be protected.

-

Safety AI systems should operate reliably and securely. AI ethics issues have been seen in areas.

Real-World Examples of AI Ethics Issues

● In hiring systems biased algorithms have led to candidate selection. In recognition inaccuracies have resulted in misidentification. ● Social media platforms have faced criticism for using AI to promote content. These examples highlight the importance of ethics, in AI. AI Ethics vs AI Regulation

Do’s Don’ts

Frequently Asked Questions

What is AI ethics?

AI ethics is the set of guidelines that govern how AI is developed and used.

Why is AI ethics important?

It makes sure that AI is fair and that people can trust it.

What are common AI ethics issues?

The main problems are bias, a lack of transparency about how systems work, and failures to protect people's privacy.

How can bias in AI be reduced?

Bias can be reduced by training on a wide range of datasets and keeping a close eye on the systems once they are in use.

What is explainable AI?

Explainable AI refers to AI systems that make decisions people can understand.

Is AI regulation necessary?

Yes. We have to make sure that people follow the rules and are held responsible for what they do with AI.

Can AI be completely unbiased?

Dealing with bias is tough, and it may not be possible to remove it entirely, but we can make it less of a problem.

What is the future of AI ethics?

As AI gets more powerful, the risks grow with it, so AI ethics will keep depending on teamwork between developers, organizations and regulators.
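For readers who want to see what testing and monitoring for bias can look like in practice, here is a minimal sketch in Python. It assumes that a system's decisions can be logged as (group, outcome) pairs, and it compares how often each group receives a favorable outcome. The function names `selection_rates` and `parity_gap`, the group labels and the sample data are all made up for illustration. The gap between group rates is only one of several possible fairness checks, not a method this guide prescribes.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable decisions for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system made a favorable decision for that person.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Made-up hiring decisions for two hypothetical groups, "A" and "B".
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(decisions)
gap = parity_gap(rates)
print(rates)  # selection rate per group
print(gap)    # a larger gap suggests the system deserves a closer look
```

A large gap does not prove that a system is unfair, since the groups may differ in relevant ways, but tracking it over time is one simple way to keep a close eye on a system.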
